AI Reveals Zoom’s Privacy Risks


July 7, 2020


VentureBeat — New BGU research uncovers the ease with which personal information including face images, age, gender, and names can be extracted from public screenshots of Zoom video conference meetings.

A newly published study coauthored by Dima Kagan, Dr. Galit Fuhrmann Alpert, and Dr. Michael Fire of BGU’s Department of Software and Information Systems Engineering shows that combining image processing, text recognition, and forensics allowed the researchers to cross-reference Zoom data with social network data. This demonstrates that meeting participants may be exposed to risks they are not aware of.

As social distancing and shelter-in-place orders motivated by the pandemic make physical meetings impossible, hundreds of millions of people around the world have turned to video conferencing platforms as a replacement.

But as the platforms come into wide use, security flaws are emerging — some of which enable malicious actors to “spy” on meetings.

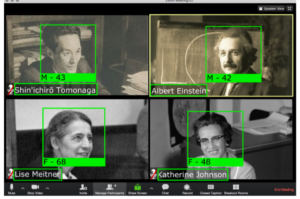

An example of a screenshot provided by the BGU research team, showing gender, age, face, and username.

BGU researchers first curated an image data set containing screenshots from thousands of meetings by using Twitter and Instagram web scrapers, which they configured to look for terms and hashtags like “Zoom school” and “#zoom-meeting.”

They then filtered out duplicates and posts without images, and trained an algorithm to identify which of the remaining images were Zoom collages.
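The collection and cleanup steps can be pictured with a minimal Python sketch. It assumes posts have already been downloaded into a hypothetical `Post` record, because the scrapers themselves are not described in detail. The sketch keeps posts that mention one of the two search terms quoted above and carry an image, then drops exact duplicate images by hashing their bytes. This is an illustration under those assumptions, not the BGU team's code.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


# Hypothetical record for a scraped post; the field names are illustrative
# assumptions, not the schema used by the BGU team.
@dataclass
class Post:
    post_id: str
    text: str
    image_bytes: Optional[bytes]  # None when the post has no image


# Search terms quoted in the article; a real scraper would use many more.
SEARCH_TERMS = ("zoom school", "#zoom-meeting")


def matches_terms(text: str, terms=SEARCH_TERMS) -> bool:
    """Case-insensitive substring match against the search terms."""
    lowered = text.lower()
    return any(term in lowered for term in terms)


def filter_posts(posts):
    """Keep posts that match a search term, have an image, and are not
    byte-for-byte duplicates of an image already kept."""
    seen_hashes = set()
    kept = []
    for post in posts:
        if post.image_bytes is None or not matches_terms(post.text):
            continue
        digest = hashlib.sha256(post.image_bytes).hexdigest()
        if digest in seen_hashes:
            continue  # exact duplicate of an image we already kept
        seen_hashes.add(digest)
        kept.append(post)
    return kept


posts = [
    Post("1", "First day of Zoom school!", b"img-a"),
    Post("2", "Repost #zoom-meeting", b"img-a"),  # duplicate image
    Post("3", "Zoom school again", None),         # no image
    Post("4", "Lunch", b"img-b"),                 # no search term
]
print([p.post_id for p in filter_posts(posts)])  # ['1']
```

Exact hashing only catches byte-identical copies. A resized or re-encoded repost would slip through, which is why near-duplicate detection usually relies on perceptual hashing instead.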

Next, the researchers analyzed each Zoom screenshot, starting with face detection.

Using a combination of open-source pretrained models and Microsoft’s Azure Face API, the BGU research team detected faces in the images with 80% accuracy, identified gender, and estimated age group (e.g., “child,” “adolescent,” and “older adult”).
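Face-analysis models commonly return a numeric age estimate for each face, while the study reports coarse groups. A minimal sketch of that bucketing step follows. The `age_group` helper and its cut-offs are hypothetical, since the article does not give the boundaries the researchers used.

```python
# Hypothetical upper bounds (in years, exclusive); the article does not
# state the boundaries the researchers used.
AGE_GROUPS = [
    (13, "child"),
    (20, "adolescent"),
    (65, "adult"),
]


def age_group(estimated_age: float) -> str:
    """Map a numeric age estimate to a coarse age group."""
    if estimated_age < 0:
        raise ValueError("age must be non-negative")
    for upper_bound, label in AGE_GROUPS:
        if estimated_age < upper_bound:
            return label
    return "older adult"  # anything at or above the last bound


print([age_group(a) for a in (8, 16, 34, 71)])
# ['child', 'adolescent', 'adult', 'older adult']
```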

They also used a freely available text recognition library, which correctly extracted 63.4% of the usernames from the screenshots.
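The 63.4% figure is a success rate over usernames. One way to score such a rate is sketched below. It assumes a reading counts as correct when it exactly matches the true name after case, Unicode, and whitespace normalization. The article does not specify the study's actual matching rule or the text recognition library it used, and the helper names here are hypothetical.

```python
import re
import unicodedata


def normalize_name(name: str) -> str:
    """NFKC-normalize, case-fold, and collapse runs of whitespace."""
    name = unicodedata.normalize("NFKC", name).casefold()
    return re.sub(r"\s+", " ", name).strip()


def extraction_accuracy(ground_truth, ocr_output):
    """Fraction of ground-truth usernames whose OCR reading matches exactly
    after normalization. Both arguments map a tile id to a username; a tile
    missing from ocr_output counts as a miss."""
    if not ground_truth:
        raise ValueError("ground_truth is empty")
    correct = sum(
        1
        for tile, true_name in ground_truth.items()
        if tile in ocr_output
        and normalize_name(ocr_output[tile]) == normalize_name(true_name)
    )
    return correct / len(ground_truth)


truth = {"t1": "Dana Levi", "t2": "Omer K", "t3": "Noa"}
ocr = {"t1": "dana  levi", "t2": "0mer K"}  # "0" misread for "O"; t3 missed
print(round(extraction_accuracy(truth, ocr), 3))  # 0.333
```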

Cross-referencing 85,000 names and more than 140,000 faces yielded 1,153 people who likely appeared in more than one meeting, as well as networks of Zoom users in which all the participants were coworkers.
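Cross-referencing by name can be sketched with a simple index from each name to the meetings it appears in. The example assumes each screenshot has already been reduced to a list of extracted usernames. It matches names exactly (case-insensitively), which is a deliberate simplification: common names collide, and the study also matched faces. The function names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def names_in_multiple_meetings(meetings):
    """meetings maps a meeting id to the usernames seen in that screenshot.
    Returns {name: sorted meeting ids} for names seen in two or more meetings."""
    seen = defaultdict(set)
    for meeting_id, names in meetings.items():
        for name in names:
            seen[name.casefold()].add(meeting_id)
    return {name: sorted(ids) for name, ids in seen.items() if len(ids) > 1}


def co_attendance_edges(meetings):
    """Link every pair of names that appeared together in a meeting; the
    resulting edges form a simple co-attendance network."""
    edges = set()
    for names in meetings.values():
        unique = sorted({n.casefold() for n in names})
        edges.update(combinations(unique, 2))
    return edges


meetings = {
    "m1": ["Dana Levi", "Omer K", "Noa"],
    "m2": ["dana levi", "Yael"],
    "m3": ["Omer K", "Yael"],
}
print(names_in_multiple_meetings(meetings))
# {'dana levi': ['m1', 'm2'], 'omer k': ['m1', 'm3'], 'yael': ['m2', 'm3']}
print(len(co_attendance_edges(meetings)))  # 5
```

Pairs of names that keep appearing together across meetings are the kind of signal that can expose a group of coworkers.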

This illustrates that data exposed in video conference meetings puts at risk not only individuals’ privacy but also the privacy and security of organizations.

“We demonstrate that it is possible to use data collected from video conference meetings along with linked data collected in other video meetings with other groups, such as online social networks, in order to perform a linkage attack on target individuals,” say the coauthors. “This can result in jeopardizing the target individual’s privacy by using different meetings to discover different types of connections.”

To mitigate these privacy risks, the researchers recommend that video conference participants choose generic pseudonyms and backgrounds.

They also suggest that organizations inform employees of video conferencing’s privacy risks, and that video conference operators such as Zoom add “privacy” modes, for example Gaussian noise filters, that foil facial recognition.
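A Gaussian noise filter perturbs every pixel by a random amount drawn from a zero-mean normal distribution. The sketch below applies one to a grayscale image stored as rows of 0-255 integers, using only Python's standard library. The default `sigma` is an arbitrary assumption, and the article does not say how much noise would be needed to defeat a particular face recognition model.

```python
import random


def add_gaussian_noise(pixels, sigma=10.0, seed=None):
    """Return a copy of a grayscale image (rows of 0-255 ints) with
    zero-mean Gaussian noise of standard deviation sigma added to every
    pixel, rounded and clipped back to the valid 0-255 range."""
    if sigma < 0:
        raise ValueError("sigma must be non-negative")
    rng = random.Random(seed)  # seeded generator for reproducible noise
    return [
        [min(255, max(0, round(p + rng.gauss(0.0, sigma)))) for p in row]
        for row in pixels
    ]


image = [[120] * 4 for _ in range(3)]  # a flat 3x4 gray test image
noisy = add_gaussian_noise(image, sigma=15.0, seed=42)
print(len(noisy), len(noisy[0]))  # 3 4
print(all(0 <= p <= 255 for row in noisy for p in row))  # True
```

In practice this would run on full-color video frames with an array library such as NumPy. The plain-list version just keeps the example dependency-free.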

“In the current global reality of social distancing, we must be sensitive to online privacy issues that accompany changes in our lifestyle as society is pushed towards a more virtual world,” the BGU coauthors add.